Strategy Deployment, Foxes and Hedgehogs

Foxes and hedgehogs are two types of thinkers. Hedgehogs focus on one big idea and foxes explore many options. Strategy deployment requires a blend of both.

Karl Scotland – Using Agility Strategically

A high level introduction to Estuarine Mapping, with an overview of how the process can be used alongside a Strategy Deployment approach.

An alternative pattern for visualising the typology of transformation tactics using triads, inspired by the triads used for Estuarine Mapping.

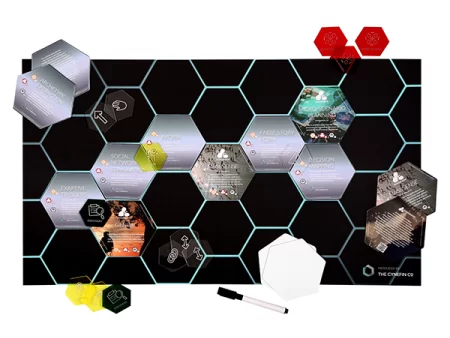

A proposal for a Cynefin Company Hexi Method Kit for Strategy Deployment, with an initial idea for what would be on the various Hexis.

At the start of the year Mike Burrows posted about an idea he called Reverse Wardley, with some background to where it came from. As one of the sources of the idea I thought I should say some more about my thinking that led to it. Mike has also called the approach Option Visibility, and in writing this post I am …

There has been much debate online, and in particular on Twitter recently, about the imposition of Agile and the Agile Industrial Complex. See Ron Jeffries’ recent blog for more context. It’s an important topic. I have seen plenty of imposed Agile which I would call Incoherent Agile. Agile processes imposed as Best Practice without any coherence or alignment with the …

Dave Snowden recently posted a series of blog posts on A Sense of Direction, about the use of goals and targets with Cynefin. As the X-Matrix uses measures in two of its sections (Aspirations and Evidence) I found that useful in clarifying my thinking on how I generally approach those areas. Let’s start by addressing Dave’s two primary concerns; the …

I’ve recently become an Agendashift partner and have enjoyed exploring how this inclusive, contextual, fulfilling, open approach fits with how I use Strategy Deployment. Specifically, I find that the Agendashift values-based assessment can be a form of *diagnosis* of a team or organisation’s critical challenges, in order to agree *guiding policy* for change and focus *coherent action*. I use those italicised terms deliberately as they come from Richard Rumelt’s book Good Strategy/Bad Strategy …

Within SAFe, PI Planning (or Release Planning) is when all the people on an Agile Release Train (ART), including all team members and anyone else involved in delivering the Program Increment (PI), get together to collaborate on co-creating a plan. The goal is to create confidence around the ability to deliver business benefits and meet business objectives over the coming weeks and months …

I have written a number of posts on systemic thinking, which I believe is at the heart of Kanban Thinking. I’m currently referring to systemic thinking, rather than Systems Thinking, in order to try and avoid any confusion with any particular school of thought, of which Systems Thinking could be one. I have also written a number of posts on Cynefin. This …