A Powerful True North Requires A Good AIM

Find out how to identify your organisational True North with the AIM framework and a simple process that enhances alignment, motivation, and strategic clarity.

Karl Scotland – Using Agility Strategically

One of my main influences in the field of Strategy is Richard Rumelt. After referencing Good Strategy / Bad Strategy, I am now being inspired by The Crux.

The Scrum Guide Expansion Pack includes an appendix on Emergent Strategy. Here are my feedback and suggestions, based on my Strategy Deployment work.

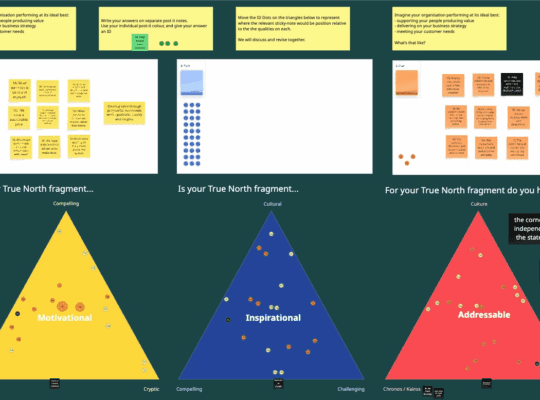

A True North is a shared story that people should be able to connect to, that resonates with them, and that articulates a real sense of purpose and direction.

Jim Benson and I will be at Kanban Edge on March 6th in London and are teaching our Making Strategy Visible workshop again the day after the conference.

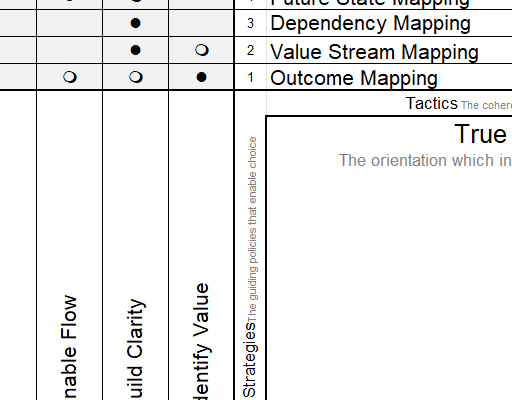

The book Flow Engineering describes an approach to creating flow through value stream mapping, one that can also be described in terms of Strategy Deployment.

Over the last few months I’ve been collaborating with Jim Benson, putting together an online training course on the X-Matrix and Collaborative Strategy.

People usually use Pragmatic Agile to describe “Bad” Agile. However, Pragmatic philosophy can explain why Pragmatic Agile is actually “Good” Agile.

An Obeya and X-Matrix go hand in hand. An Obeya enhances an X-Matrix with further information, and the X-Matrix provides a structure for all the information.

Over the next couple of months I’m involved in three Lean Agile events, and I’d love to see people there!